USTC Reveals the Implicit Perception in Distinguishing Human and NLP-Produced Language

Natural Language Processing (NLP) refers to the technology that enables machines to interact and communicate with humans through the natural language people use in daily communication. This technology allows computers to express given intentions and thoughts through natural language generation. In a study published in Advanced Science, Prof. ZHANG Xiaochu and his team from the University of Science and Technology of China (USTC) of the Chinese Academy of Sciences (CAS) demonstrated the crucial role of implicit neural information in language perception and comprehension by comparing the neural activity evoked by human-produced and NLP-produced language. The study also offers promising ideas for evaluating the quality of natural language generation.

The NLP research community has long sought to generate language matching the quality of human-produced language. Despite the tremendous progress made in this field, evaluating the quality of NLP-produced language still poses significant challenges. Studies in the psychology of language suggest that language contains rich social and psychological information about the speaker, which readers or listeners process mainly at an implicit level. Therefore, adding implicit perception information to the quality evaluation criteria for natural language generation is a promising direction.

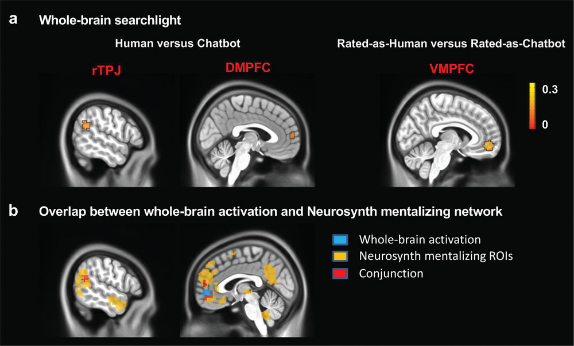

In this study, Prof. ZHANG's team collected corpora from the chatbots Google Meena and Microsoft XiaoIce as representatives of NLP-produced language, with a human corpus as control material. Functional magnetic resonance imaging (fMRI) was used to record participants' neural signals as they viewed and evaluated the two corpora. The analysis found that even when participants subjectively judged both the human corpus and the chatbot corpus to be human-like, activation levels in the dorsomedial prefrontal cortex and the right temporoparietal junction, which are core areas of the brain's mentalizing network, could still significantly distinguish the source of the corpus. Neural activity evoked by corpora from different sources and with different judgments showed remarkable similarities across subjects.

The study found that the significantly activated brain regions overlapped with the mentalizing network derived from a Neurosynth meta-analysis, indicating that the brain regions involved in implicit perception when distinguishing between human-produced and NLP-produced language were indeed part of the mentalizing network.

Whole-brain analysis and overlap analysis results. (Image by WEI et al.)

These findings suggest that the neural signals of the brain's implicit perception are more sensitive to evaluative information than self-reports are. Incorporating such information into evaluation criteria can help advance NLP technology. The study also provides a new perspective for developing a new Turing test to measure the level of artificial intelligence.

(Written by ZHENG Ruichen, edited by MA Xuange, USTC News Center)